
Your future customers are complaining about their problems right now. In public. For free. Most founders never see it because they're waiting to run customer interviews — but you can't interview customers you don't have yet.

The good news: you don't need a single user to start finding real customer pain points. Every community platform is an archive of unfiltered frustration, and knowing where to look turns market research from a six-hour Reddit spiral into a repeatable, 30-minute workflow.

This guide covers five channels where pain points surface organically, how to tell signal from noise, and how to turn raw complaints into decisions you can act on today.

Why Standard Pain Point Advice Fails Founders

Every framework you've read says the same thing: "Just interview your customers."

That advice works if you already have customers. If you're pre-PMF — building something new, still finding your first users — it's useless. You're stuck in a loop: you need pain point data to build the right thing, but you need a product to find customers to interview.

The real insight that breaks this loop: your target customers are already describing their problems online. They're posting on Reddit, complaining in YouTube comment sections, and leaving one-star reviews on the tools they're using as a workaround. That conversation is happening whether you show up or not.

Your job is to find it.

The 5 Places Real Customer Pain Points Live

Reddit Communities

Reddit is the highest-signal pain point database available to any founder, and it costs nothing but time.

The reason it works: Reddit's anonymity removes the social pressure to sound reasonable. Users describe failed purchases in forensic detail, name the exact feature that made them cancel a subscription, and argue about which tool is actually worth paying for. They have no incentive to be polite or vague.

Start with communities that match your ICP. For B2B SaaS founders, r/SaaS, r/startups, r/microsaas, and r/indiehackers are the obvious starting points. Then go narrower: if you're building for legal ops, r/legaladvice will surface raw customer pain that r/SaaS never will. Niche vertical subreddits often have more candid, specific complaints than the broad general ones. For a full breakdown of how to map subreddits to your ICP and build an audience profile from what you find, see the Reddit Audience Research Guide.

Once you're in the right communities, look at comment sections more than post titles. Comments are where the real emotion lives — the replies where someone describes the workaround they've been doing for months, or the reply that says "I've been looking for exactly this for two years." Posts describe a situation. Comments reveal frustration.

Frequency signal: One post saying something is broken is an anecdote. The same complaint appearing across five different threads, started by different people, at different times, is a validated pain point. Upvotes tell you how many people agreed enough to click.

If you want to skip manual subreddit discovery, the free subreddit finder gives you a ranked list of communities relevant to your product category.

YouTube Comment Sections

YouTube comment sections on competitor product reviews are one of the most underused customer research channels.

The signal is concentrated: people who watch a 10-minute product review and then leave a comment are high-intent. They're evaluating options. When they complain, they're describing gaps in the market. Skip the praise and read what commenters say is missing or broken.

Tutorial video comments are equally useful. When someone can't figure something out, they say so. Their confusion is your product opportunity.

Search "[competitor name] review" or "[category] tutorial" and spend 20 minutes reading the comments. You'll surface real frustrations from real users faster than any survey.

App Store and G2 / Capterra Reviews

For B2B SaaS, G2 and Capterra one-star reviews are a direct window into what your competitors are failing to deliver.

The format forces specificity. Reviewers explain what went wrong, what feature was missing, what made them switch. Filter by "cons" and "what's missing" across 10–20 reviews of competitor tools and you'll see patterns emerge fast.

For consumer products, App Store reviews at 1–2 stars work the same way. Sort by most recent to catch emerging complaints before competitors have responded to them. For a broader playbook on finding B2B customers across platforms beyond review sites, see 10 Proven Methods to Find B2B Customers on Social Media.

X (Twitter) Threads and Replies

Search on X for phrases that signal frustration or unmet need:

"[category] is frustrating"
"I wish [tool] could"
"why is there no [thing]"
"anyone know a way to"

Quote-tweets are especially valuable — they surface secondary reactions to problems, which often reveal adjacent pain that the original post didn't capture. Low-follower accounts tend to complain most honestly; they're not performing for an audience.

The weakness of X for research is that threads are short and context-thin. Use it to detect signals, then verify the pattern on Reddit or review sites where people go into more detail.

Niche Slack and Discord Communities

Invite-only communities have higher signal than public platforms for one reason: the barrier to entry filters for people who are serious about the topic.

In a Slack community built around a specific workflow or industry, members share raw frustrations they'd never post publicly. Problems are described in context. Workarounds are shared as solutions. That context is gold for product research.

Many niche Slack communities have searchable history. Use it. Search for problem-adjacent phrases in #general, #tools, or #help channels and you'll find dense concentrations of honest pain.

If the community hosts work in public — troubleshooting threads, shared screenshots, live workflow questions — watch how members actually do things. The friction they've normalized, and never think to complain about explicitly, is often the highest-value pain to solve. People rarely articulate the thing they've been doing manually for two years. They just do it.

How to Turn Raw Complaints Into Usable Insights

Look for Frequency, Not Intensity

The loudest complaint isn't necessarily the most common one. A single post with heated language is anecdote. Ten people calmly expressing the same frustration across separate threads is signal.

Before you act on a pain point, count how many times you've seen it stated independently. Set a minimum threshold of three independent sources before you treat it as real. Five is better.

Upvotes, likes, and reply agreement help here. A comment with 200 upvotes that says "I hate how X works" is telling you that roughly 200 people felt strongly enough to click. That's the closest thing to frequency validation you'll find in unstructured community data.
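If you log complaints as you read, the "independent sources" rule is easy to apply mechanically. The Python sketch below is one possible approach, not a standard method: it assumes each complaint has been tagged with a pain-point label you chose yourself, plus its author and thread. The `Complaint` type, the function names, and the sample data are all hypothetical. It counts conservatively, taking the smaller of distinct authors and distinct threads, so one user repeating themselves, or one heated thread, counts only once.

```python
from collections import defaultdict
from dataclasses import dataclass


# Hypothetical record type for illustration; not from any library.
@dataclass(frozen=True)
class Complaint:
    pain_point: str  # your own short label, e.g. "manual weekly export"
    author: str      # username of the person who wrote it
    thread_id: str   # the post or thread it appeared in


def independent_source_counts(complaints: list[Complaint]) -> dict[str, int]:
    """Conservative count of independent sources per pain point.

    Uses min(distinct authors, distinct threads), so one user posting in
    many threads, or many replies in one thread, counts as a single source.
    """
    authors: dict[str, set[str]] = defaultdict(set)
    threads: dict[str, set[str]] = defaultdict(set)
    for c in complaints:
        authors[c.pain_point].add(c.author)
        threads[c.pain_point].add(c.thread_id)
    return {p: min(len(authors[p]), len(threads[p])) for p in authors}


def validated(complaints: list[Complaint], threshold: int = 3) -> list[str]:
    """Pain points seen in at least `threshold` independent sources (3 minimum, 5 is better)."""
    counts = independent_source_counts(complaints)
    return sorted(p for p, n in counts.items() if n >= threshold)


if __name__ == "__main__":
    sample = [
        Complaint("manual weekly export", "alice", "t1"),
        Complaint("manual weekly export", "bob", "t2"),
        Complaint("manual weekly export", "carol", "t3"),
        Complaint("no dark mode", "dave", "t4"),
        Complaint("no dark mode", "dave", "t5"),  # same author again
        Complaint("no dark mode", "erin", "t4"),  # same thread again
    ]
    print(independent_source_counts(sample))
    # → {'manual weekly export': 3, 'no dark mode': 2}
    print(validated(sample))
    # → ['manual weekly export']
```

Raising `threshold` to 5 applies the stricter bar from the paragraph above.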

Capture Their Exact Words — Not a Paraphrase

This is the most important step most founders skip.

When someone writes "I've been manually opening 30 tabs every week just to keep up with what's happening in my space," copy that sentence verbatim. Don't summarize it as "user finds manual research tedious."

The exact phrasing is what matters. Those are the words that belong in your landing page headline, your onboarding copy, your email subject lines. When you reflect customers' exact language back at them, conversion rates climb because they feel seen. Paraphrasing loses the emotional charge that makes copy resonate.

Keep a running tracker: subreddit or source, the verbatim quote, and the number of times you've seen the same sentiment expressed. That tracker becomes your positioning library.
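A spreadsheet is enough for this tracker. If you'd rather keep it in a plain file, here is a minimal Python sketch that stores the three columns described above in a CSV. The function name, the file layout, and the choice to match rows on the exact quote text are assumptions for illustration; deciding which near-identical sentiments belong under one representative quote is still a judgment call you make by hand.

```python
import csv
from pathlib import Path

# Hypothetical tracker layout: one row per verbatim quote.
FIELDS = ["source", "quote", "count"]


def record_sighting(path: Path, source: str, quote: str) -> int:
    """Log one sighting of a sentiment and return its updated count.

    Rows are matched on the exact quote text. `source` keeps the subreddit
    or site where that verbatim quote was first captured.
    """
    rows: list[dict[str, str]] = []
    if path.exists():
        with path.open(newline="", encoding="utf-8") as f:
            rows = list(csv.DictReader(f))

    for row in rows:
        if row["quote"] == quote:
            count = int(row["count"]) + 1
            row["count"] = str(count)
            break
    else:
        # First sighting: store the quote verbatim, never paraphrased.
        count = 1
        rows.append({"source": source, "quote": quote, "count": str(count)})

    # Rewrite the whole file; fine for a tracker of a few hundred rows.
    with path.open("w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        writer.writeheader()
        writer.writerows(rows)
    return count
```

Call it once per sighting, for example `record_sighting(Path("pain_tracker.csv"), "r/SaaS", quote)`; the returned count tells you when a quote has crossed your threshold.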

Distinguish Pain From Preference

Not every complaint is a business opportunity.

Pain: "I can't do X at all" / "X takes me three hours every week and I hate every minute" / "I've tried four tools and none of them solve this."

Preference: "It'd be nice if the UI had dark mode" / "I wish the export included a CSV option."

Build for pain first. Preferences are nice-to-have features that rarely drive purchase decisions. Pain is what makes someone switch tools, pay for a new solution, or manually build a workaround they've been using for months.

The workaround is the clearest signal of all. When you see people describing a manual, cobbled-together process they've built to solve a problem — that's an unmet need with no product filling it. Build that product.

A quick test for ambiguous cases: if a product made this problem disappear tomorrow, would someone pay to keep it gone? Time-tax pain (hours wasted weekly) and financial pain (money lost or overpaid) pass this test. Dark mode and CSV export don't. That's the line between a business and a feature request.

The Problem With Manual Research (And How AI Changes It)

The Time Tax Is Real

If you've tried this workflow manually, you know what it costs: 30 tabs open, hours of reading, notes scattered across Notion, and at the end of it you're not sure if you have enough signal to draw any conclusion.

The average founder spends over four hours per week doing manual audience and competitive research. That's time that isn't going into building, selling, or shipping.

Manual research also suffers from selection bias. When you search Reddit yourself, you unconsciously look for threads that confirm what you already believe. You find three posts that validate your thesis, call it research, and move forward. That's not research — it's self-deception.

Noise Is Getting Worse

AI-generated Reddit posts and bot comments have multiplied. A single spam thread can skew your entire research session if you're reading manually and not filtering carefully. Spotting AI-generated content takes attention and practice, and even experienced researchers miss some of it.

The more you rely on manual reading, the more susceptible your research becomes to bad data.

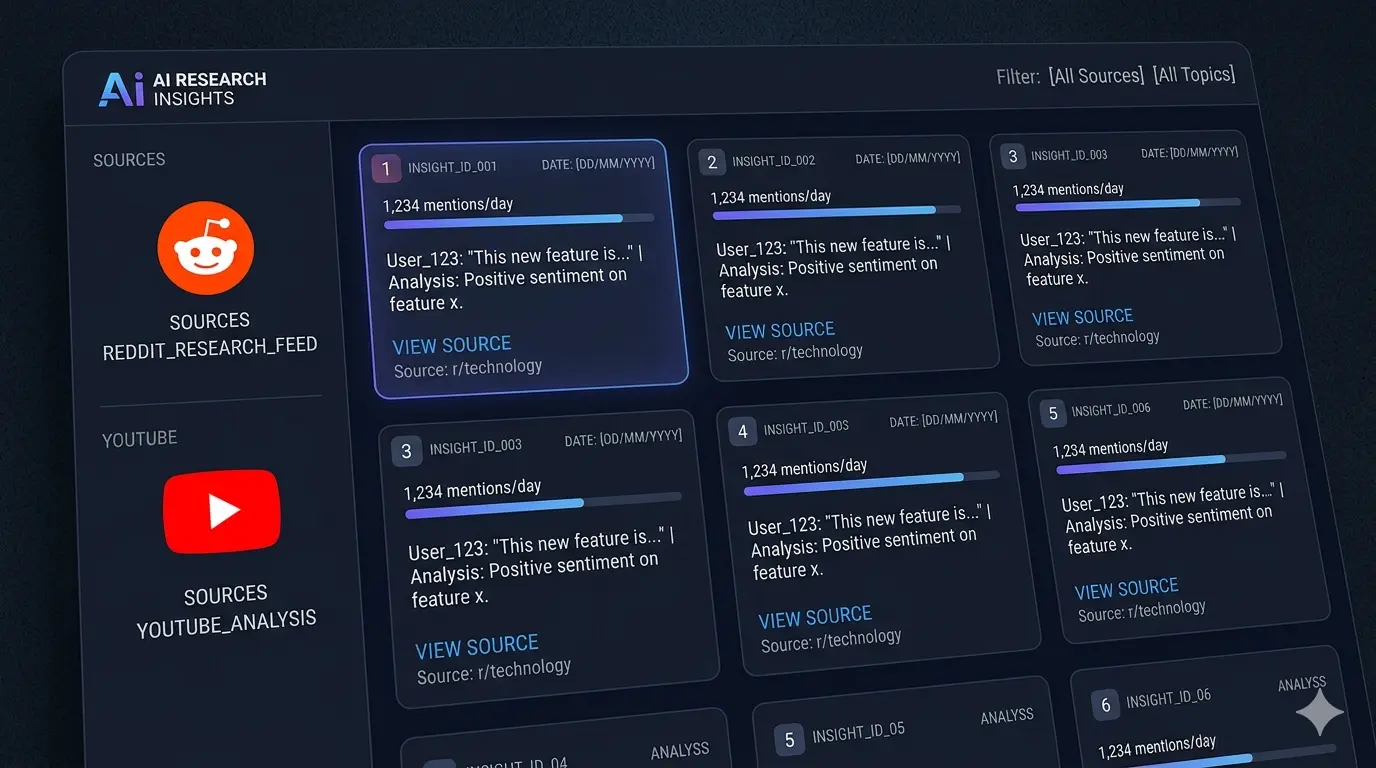

What AI-Powered Research Looks Like

The right tool for community research does four things: it lets you describe what you need in plain language, searches across multiple communities and platforms simultaneously, filters out spam and AI-generated noise before you see any results, and returns ranked pain points with direct links to the original source posts.

That last part matters. Every insight needs to be traceable. If you can't click through to the original Reddit thread, you can't verify the data, and you can't show it to a co-founder or investor as evidence.

Reddinbox works this way — it's a research agent that takes a plain-language description of what you want to understand, searches Reddit and other communities on demand, filters out bots and low-quality posts, and surfaces ranked pain points with full citation trails. Research that used to take three to six hours of manual scrolling is done in minutes, and the output is specific enough to act on. If you want to see how it compares to doing this research manually, see The Best Customer Research Methods in 2026.

From Pain Points to Product Decisions

Validate Before You Build

Once you have 8–10 pain points documented with frequency counts, validate the top three before touching your roadmap. If you're fully pre-PMF, How to Validate Startup Ideas on Reddit covers the full framework for reading buying intent from community data.

Validation doesn't require interviews. First, check whether people are actively searching for solutions: Google Keyword Planner will tell you whether "automate Reddit research" gets 1,000 monthly searches or zero. Then check the exact questions people are asking: the "People Also Ask" box on Google, Reddit search autocomplete, and AnswerThePublic all surface real queries people are using to find solutions right now. A high-volume search query for a problem means people have accepted that the pain is real and are actively looking for a way out.

Check if they're paying for workarounds: if someone built a manual spreadsheet system for a problem, they're signaling willingness to pay to solve it. That revealed behavior is worth more than any answer to "would you pay for this?" — people lie when asked hypothetically, but their time and money tell the truth.

If both conditions are true — people are searching for solutions and using workarounds — you have a validated pain point worth building around.

Use Pain Points to Write Better Copy

The fastest landing page improvement most founders can make is replacing their own language with their customers' language.

Take the most emotionally loaded phrase from your research and test it as a headline. If ten people described their problem as "I've been doing this manually for months and I hate it," your headline might be: "Stop doing it manually." That phrase will resonate in a way that "streamline your research workflow" never will.

This is the compounding benefit of pain point research that most advice skips: the output isn't just product decisions. It's the exact copy that makes someone feel seen when they land on your page.

Keep a Running Log

Markets shift. Pain points evolve. The complaint that dominated Reddit in January may be solved by February if a competitor ships the right feature. Positioning that worked at $0 MRR may need updating at $10k.

Set a recurring time each week to add new findings to your tracker. After four weeks, look for shifts: did a new pain category emerge? Did the frequency of an existing one increase? Those shifts are signals worth acting on before your competitors notice them. If you want this monitoring automated, Buying Signals runs 24/7 across Reddit, X, and Hacker News and delivers matched conversations as they happen. For a full weekly research system, see How to Use Reddit for Market Research.
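The four-week review amounts to comparing two snapshots of your tracker. As a minimal Python sketch, assuming you have totalled sightings per pain category for an earlier and a later period (the function name and the category names are made up for illustration):

```python
from collections import Counter


# Hypothetical helper for illustration; not part of any tool mentioned above.
def compare_periods(before: Counter[str], after: Counter[str]) -> tuple[list[str], dict[str, int]]:
    """Compare per-category sighting counts between two periods.

    Returns (categories that are new in `after`,
             {category: increase} for categories whose count grew).
    """
    new = sorted(c for c in after if c not in before)
    grew = {
        c: after[c] - before[c]
        for c in sorted(after)
        if c in before and after[c] > before[c]
    }
    return new, grew


if __name__ == "__main__":
    week_1 = Counter({"manual reporting": 4, "pricing": 2})
    week_4 = Counter({"manual reporting": 9, "pricing": 2, "API limits": 3})
    print(compare_periods(week_1, week_4))
    # → (['API limits'], {'manual reporting': 5})
```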

Frequently Asked Questions

How do you find customer pain points without customer interviews?

Community platforms are the answer. Reddit, YouTube comment sections, G2 and Capterra reviews, X, and niche Slack communities contain millions of unsolicited, unfiltered complaints from people with no incentive to be polite. You don't need to recruit anyone or schedule a call; you need to find where your target customers vent and read at scale. The five channels in this guide are the starting point. The main skill is learning to tell repeated, independent complaints apart from noise.

How do I know if a pain point is real or just a mild preference?

Apply the disappear test: if a product made this problem disappear tomorrow, would someone pay to keep it gone? Time-tax pain (hours wasted weekly) and financial pain (money lost, tools overpaid, revenue missed) pass this test. UI preferences such as dark mode, a different export format, or slightly better onboarding don't. The strongest signal is the workaround: if someone built a manual, cobbled-together process to solve a problem, the pain is real enough that they already invested time in a solution.

How many independent sources do I need before a pain point is validated?

Three is the minimum before you take a pain point seriously. Five independent sources (different people, different threads, different time periods) is better. Upvotes amplify this: a comment with 200 upvotes represents roughly 200 people who felt strongly enough to click. One emotionally charged post is anecdote. The same complaint appearing across five separate threads, started by different users at different times, is a validated pain point.

What do I do when different customers describe completely opposite pain points?

Opposite pain points almost always signal an ICP segmentation problem, not a research problem. Segment your sources by company size, role, or use case before comparing signals. A solopreneur and an enterprise buyer using the same tool will describe entirely different frustrations with it — they're not the same customer. Pick one segment, go deeper into their specific communities, and commit. Averaging signals across incompatible customer profiles produces a product nobody wants.

How do you identify pain points in a niche or "boring" established industry?

Niche subreddits and vertical Slack communities are the best starting point. People in legacy-heavy industries often describe pain in terms of workarounds they've been running for years — "we've been manually exporting this to Excel every Friday since 2019." They've normalized the friction so completely they don't call it a problem anymore. Look for the "we've always done it this way" phrasing — that's the signal. The longer a workaround has existed, the stronger the unmet need it represents.

Can AI personas or synthetic users replace real early-stage customer research?

No — and the reason matters. Synthetic users reflect the training data they were built from, which is generic, often outdated, and skewed toward what's already been written about online. They can't surface the specific, candid frustrations of a niche audience in 2026. What AI can do productively is help you search and synthesize real human community data at scale — filtering noise, ranking frequency, and surfacing source links. That's a fundamentally different task, and one where AI adds genuine leverage over manual research.

How do I find what people are actively searching for to confirm a problem exists?

Two methods work together. Google Keyword Planner shows monthly search volume for problem-aware queries — if "automate reddit research" gets 2,000 searches a month, that's active demand confirmation. The "People Also Ask" box, Reddit search autocomplete, and AnswerThePublic surface the exact questions people are typing to find solutions right now. Pair keyword data with community signal: if people are both searching for a solution and posting about the same frustration on Reddit, you have strong triangulated confirmation the pain is real.

Real customer pain points aren't hidden. They're in the communities your customers already use, stated clearly, in their own words, with frequency signals you can count.

You don't need customers to find them. You need the right channels and a system for distinguishing signal from noise.

Start with one subreddit this week. Read 20 threads. Copy the five most emotionally charged phrases you find into a document. That document is the beginning of your positioning.

When you're ready to stop doing that manually, try Reddinbox free — it runs the same research across communities on demand, filters noise automatically, and hands you ranked insights with source links in minutes.